Structured Content in the Age of LLMs: When and Where It Fits

The Question on the Table

Somewhere in the last two years, a question started surfacing in content strategy meetings that would have sounded strange a decade ago: do we still need structured content?

The question is not unreasonable. Large language models have demonstrated a remarkable ability to work with unstructured text. They summarize documents, generate marketing copy from rough notes, extract meaning from messy data, and answer questions across sprawling knowledge bases. If an LLM can take a pile of unformatted content and produce something useful, the logic goes, why spend time and money modeling content into atomic, reusable components? Why invest in a headless CMS with rigid content types when an AI can apparently make sense of anything?

This is a question worth taking seriously. Organizations that have invested in structured content architectures, headless CMS platforms, atomic content models, and metadata taxonomies are right to ask whether those investments still hold. The cost of maintaining structured content is real: content modeling, editorial workflows, governance overhead, platform licensing. If LLMs have genuinely reduced the need for that structure, continuing to invest in it is a strategic mistake.

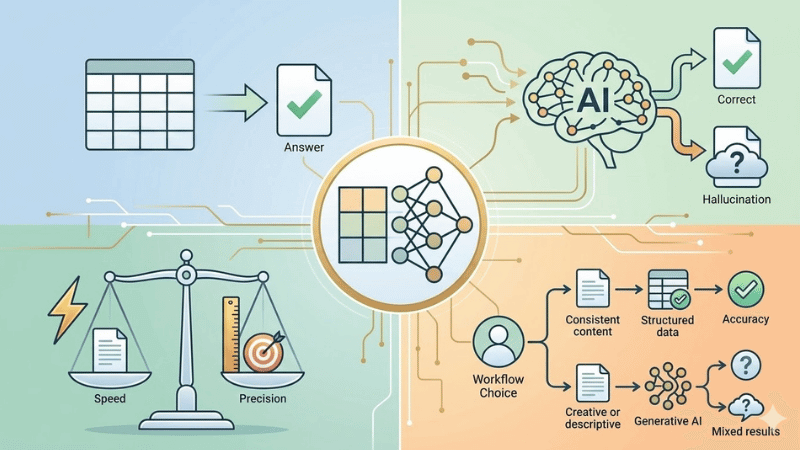

This article does not argue for one side or the other. Instead, it examines what the current evidence says across four dimensions: how well LLMs handle unstructured content, where they fall short, how structure affects retrieval accuracy, and what role structured content plays beyond AI altogether. The goal is a clear-eyed look at the data, followed by a practical framework for deciding when structured content is worth the investment and when it isn’t.

Before going further, some shared definitions. Structured content refers to content organized into predefined models with discrete, typed fields: a product description broken into name, features, price, and image rather than stored as a single block of text. Unstructured content is content without a predefined data model: documents, emails, PDFs, blog posts stored as freeform text. Atomic content is a specific approach to structured content where information is broken into its smallest reusable units, designed to be assembled and reassembled across channels and contexts.

With those terms grounded, here is what the evidence shows.

What LLMs Actually Do Well with Unstructured Content

To evaluate whether structured content is still necessary, it helps to start with an honest accounting of what LLMs can do without it. The capabilities are real, and understating them would undermine the rest of this analysis.

According to IDC, over 80% of enterprise data is unstructured 1. That percentage is growing at 55-65% annually, outpacing structured data growth by three to four times. LLMs were built for this reality. They process natural language without requiring predefined schemas, and they do it across a range of tasks that previously demanded significant human effort.

Summarization is one of the strongest use cases. A 2025 study evaluating 17 large language models across seven benchmark datasets found that leading models achieve high factual consistency scores when summarizing documents 2. Models from Google, OpenAI, and Anthropic all performed well, with the best results showing factual consistency above 98% on standard summarization benchmarks. The practical implication: give an LLM a long, unstructured document and ask it to summarize the key points, and it will do a credible job.

Content generation from unstructured input is similarly strong. LLMs can take scattered notes, rough drafts, and freeform brainstorming and produce polished outputs: blog posts, email campaigns, ad copy, product descriptions 3. Marketing teams in particular have adopted LLMs for exactly this purpose, converting customer reviews, survey responses, and campaign feedback into refined messaging without needing to pre-structure any of the source material.

Sentiment analysis and categorization also work without upfront structuring. LLMs can process customer feedback, support tickets, and social media mentions, identifying themes and sentiment patterns across large volumes of freeform text 3. These are tasks that previously required either manual tagging or purpose-built NLP pipelines.

The pattern across all of these use cases is consistent: when the task is about comprehension, meaning understanding, summarizing, generating, or classifying text, LLMs perform well with unstructured input. They do not need content to be broken into atomic components or organized into typed fields to produce useful output in these scenarios. For organizations whose primary content need is generating marketing copy, summarizing internal documents, or analyzing customer sentiment, the case for heavy investment in content structuring is genuinely weaker than it was five years ago.

That said, comprehension is only one category of content work. The next section looks at what happens when the task shifts from understanding content to reliably extracting and operationalizing it.

Where LLMs Hit a Wall Without Structure

The previous section covered what LLMs do well. This section covers where the evidence shows they do not.

The distinction comes down to a fundamental difference between two types of tasks. Comprehension tasks, such as summarizing a document or generating a response, reward fluency and contextual reasoning. Extraction tasks, such as pulling a specific value from an invoice or categorizing content against a controlled taxonomy, reward deterministic accuracy 4. LLMs are probabilistic systems. They predict the most likely next token based on patterns in training data. That architecture is well suited to the first category and poorly suited to the second.

The data reflects this. A 2025 benchmarking study tested ten leading LLMs on unstructured text categorization using the IAB 2.2 taxonomy across 8,660 samples. The results were modest: average accuracy of 34%, precision of 42%, recall of 45%, and an F1-score of 41% 5. Models frequently hallucinated categories that did not exist in the taxonomy. Ensemble-based approaches improved the results significantly and eliminated hallucinations, but the baseline performance of individual models on a straightforward categorization task was low.

Hallucination remains the central reliability problem. A 2026 research report aggregating data from multiple benchmarks found that global business losses from AI hallucinations reached $67.4 billion in 2024 6. The best-performing models achieve hallucination rates as low as 0.7% on basic summarization, but those rates climb sharply in specialized domains: 18.7% on legal questions, 15.6% on medical queries 6. On the Vectara benchmark’s updated dataset of 7,700 longer, more complex documents, even top models showed hallucination rates between 3.3% and over 10% 6.

A particularly notable finding from MIT research: models are 34% more likely to use confident language, words like “definitely” and “certainly,” when generating incorrect information than when providing accurate responses 6. The implication for enterprise content operations is significant. An LLM processing unstructured content will not flag its own errors. It will present fabricated data with the same tone and confidence as verified facts.

Structured data directly addresses this problem. The same research found that schema-enforced formats, such as JSON or well-modeled content types, reduce hallucination rates by 40-60% compared to processing raw, unstructured text 6. When an LLM operates against content with explicit entity boundaries and typed relationships, there is less room for the model to fill gaps with plausible-sounding fabrication.

The extraction problem is equally documented. LLMs struggle with document layout comprehension, losing positional context that humans use to identify fields 4. Tokenization flattens structured relationships into linear sequences, meaning that a content model’s hierarchy is invisible to the model at the token level 4. Academic benchmarking of structured data extraction found that even state-of-the-art models frequently produce outputs with missing fields, incorrect values, and hallucinated data 7.

None of this means LLMs are unreliable for all tasks. As the previous section showed, they perform well on comprehension and generation. But when the task requires precision, consistency, and structured output from unstructured input, the evidence shows measurable and, in some domains, substantial failure rates.

The Retrieval Gap: Structured vs. Unstructured Knowledge

One of the most common ways organizations use LLMs with their own content is through Retrieval-Augmented Generation, or RAG. In a RAG system, an LLM answers questions by first retrieving relevant content from a knowledge base, then generating a response grounded in that content. It is the backbone of enterprise AI search, customer support bots, and internal knowledge assistants.

The architecture of the knowledge base, whether it stores content as unstructured text chunks or as structured entities with defined relationships, has a measurable impact on the accuracy of the responses.

A 2025 benchmark tested both approaches against 500,000 enterprise documents, including contracts, policies, emails, and reports, running 5,000 queries across five categories 8. The results showed a clear pattern.

For simple lookups, such as “What is the refund policy?”, the difference was minimal. Standard RAG achieved 91% accuracy compared to 94% for GraphRAG, the structured approach that layers a knowledge graph over the content. A 3% gap is unlikely to justify the added infrastructure for most organizations.

The gap widened dramatically for more complex queries:

| Query Type | Unstructured (RAG) | Structured (GraphRAG) | Difference |

|---|---|---|---|

| Simple lookup | 91% | 94% | +3% |

| Multi-hop reasoning | 54% | 89% | +35% |

| Relationship queries | 41% | 87% | +46% |

| Temporal queries | 38% | 82% | +44% |

| Aggregation queries | 62% | 78% | +16% |

| Average | 57% | 86% | +29% |

Multi-hop reasoning, where the system needs to connect information across multiple documents to answer a question like “Which contracts with Acme reference the 2023 amendment?”, saw a 35-point accuracy improvement with structured knowledge. Relationship queries, where the system needs to understand how entities connect, showed a 46-point gap 8.

The structured approach also reduced hallucinations by 40-60% through what the researchers describe as structured fact representation with provenance, meaning the system can trace an answer back through a defined chain of entities and relationships rather than relying solely on semantic similarity between text chunks 8.

The tradeoff is cost and latency. The structured approach averaged 2.2 seconds per query compared to 0.9 seconds for unstructured RAG, and monthly infrastructure costs roughly doubled 8. For simple FAQ-style applications, that overhead is hard to justify. For enterprise knowledge bases handling complex, interconnected content, the accuracy improvement is substantial.

A related finding comes from StructRAG, a 2024 research framework presented at ICLR 2025. StructRAG takes a different approach: rather than requiring content to be pre-structured, it has the LLM reconstruct unstructured documents into structured formats at inference time, selecting the optimal structure type for each query 9. The results achieved state-of-the-art performance on knowledge-intensive reasoning tasks.

The implication is worth noting. Even when the starting material is unstructured, the system performs better when structure is imposed before reasoning. The question is whether that structure is built once at the content layer and reused across every query, or rebuilt on the fly for each individual request. The first approach has higher upfront cost. The second has higher per-query cost and no guarantee of consistency across requests.

What Structured Content Was Always Doing (That LLMs Don’t Replace)

The conversation about structured content and LLMs often centers on AI capabilities: retrieval, generation, extraction. But structured content was never primarily about AI. It was about solving content operations problems that exist regardless of whether an organization uses large language models at all.

Content reuse is the most straightforward example. When a product description is modeled as discrete fields, name, features, specifications, pricing, it can be assembled differently for a website, a mobile app, an email campaign, a print catalog, and a voice assistant without duplicating or rewriting content. When the same description is stored as a single block of freeform text, every channel requires its own version, and keeping them synchronized is a manual process. This was true before LLMs and remains true after them.

The ROI data on structured content reuse is well documented. Research on component content management systems shows ROI figures of over 300% for mid-market companies, up to 687% for large enterprises, and up to 961% for very large enterprises 10. The primary driver is not AI readiness. It is the elimination of redundant content production, reduced translation costs through delta-based updates rather than full-document retranslation, and faster time-to-market across regions and languages.

Governance and compliance represent another category that LLMs do not address. Structured content models enforce rules at the content layer: required fields, controlled vocabularies, approval workflows, audit trails. When a pharmaceutical company needs to ensure that every product page includes a regulatory disclaimer in the correct position, that is a content modeling problem. An LLM can generate the disclaimer text, but it cannot enforce that the field exists, that it was reviewed, or that it meets regulatory requirements across markets.

Localization at scale depends on structured content as well. When content is atomic, translation workflows process only the components that have changed, significantly reducing both cost and turnaround time. Unstructured content requires translators to work with entire documents, even when only a paragraph has been updated.

Omnichannel delivery is perhaps the most practical consideration. Organizations delivering content to websites, native apps, kiosks, chatbots, and wearables need content that is presentation-independent. Structured content, separated from its display logic, can be delivered through APIs to any frontend. Unstructured content, typically formatted for a single channel, requires transformation for each additional touchpoint.

None of these problems are new. None of them were created by the limitations of AI. And none of them are solved by an LLM’s ability to process unstructured text. They are operational, organizational, and architectural challenges that structured content was designed to address. The question of whether structured content is still needed cannot be answered solely by evaluating LLM capabilities, because LLM capabilities were never the full picture.

The Emerging Picture: Structure as AI Infrastructure

The previous sections examined structured and unstructured content through the lens of current LLM capabilities and existing content operations needs. This section looks at where the trajectory is heading, based on what major technology companies and industry analysts are investing in.

In November 2024, Anthropic open-sourced the Model Context Protocol, or MCP, a universal standard for connecting AI assistants to external data sources 11. The protocol provides a single interface through which AI agents can read from and write to content repositories, business tools, and databases. By December 2025, MCP had been adopted by OpenAI’s ChatGPT, Google Gemini, Microsoft Copilot, and Cursor, among others. Over 10,000 public MCP servers were deployed, with 97 million monthly SDK downloads across Python and TypeScript. Anthropic subsequently donated the protocol to the Linux Foundation, with co-founding support from OpenAI, Google, Microsoft, AWS, and Bloomberg 11.

The relevance to structured content is direct. MCP servers expose data through defined schemas. AI agents that connect through MCP interact with content as typed entities with explicit relationships, not as raw text. The protocol’s architecture assumes that the systems it connects to have structured, queryable data. Content stored as unstructured blobs is harder to expose through MCP in a way that agents can reliably act on.

Forrester’s 2026 predictions project that 30% of enterprise application vendors will launch MCP servers, enabling external AI agents to collaborate across platforms without vendor lock-in 12. Separately, Gartner forecasts that 40% of enterprise applications will embed AI agents by the end of 2026, up from 5% in 2025 12. The scale of this shift is significant. Enterprise software is being redesigned around the assumption that AI agents will be primary consumers of data alongside human users.

This creates a practical consideration for content architecture. If AI agents are going to interact with an organization’s content, and the industry trajectory suggests they will, that content needs to be available in formats agents can work with reliably. Structured content models, with typed fields, defined relationships, and API-accessible repositories, are natively suited to this. Unstructured content stored in documents and wikis can still be processed by agents, but with the accuracy limitations documented in earlier sections.

The pattern emerging across the industry is not that structured content is being replaced by AI. It is that structured content is becoming the infrastructure layer that AI systems depend on. Organizations that have already invested in content modeling, headless CMS platforms, and atomic content architectures are finding that those investments translate directly into AI readiness. Organizations that have not are facing a structuring challenge before they can fully leverage AI agents at scale.

A Framework for Deciding

The evidence reviewed in this article points to a consistent conclusion: the question is not whether to use structured or unstructured content, but when each approach is the better fit. The following framework summarizes the patterns that emerged across the research.

Unstructured content is likely sufficient when:

- The primary task is content generation, such as drafting marketing copy, blog posts, or internal communications from rough inputs

- The content is single-use or short-lived, not intended for reuse across channels or regions

- The use case involves summarization or synthesis, where LLMs perform reliably on unstructured inputs

- Retrieval needs are simple, limited to single-fact lookups where standard RAG achieves over 90% accuracy

- The content does not carry compliance, regulatory, or audit requirements

Structured content is likely essential when:

- Content must be delivered across multiple channels, devices, or platforms from a single source

- The organization operates across regions or languages and needs efficient localization workflows

- Retrieval requirements include complex queries, multi-hop reasoning, or relationship-based lookups, where structured knowledge bases outperform unstructured ones by 29-46 percentage points

- Governance, compliance, or audit trails are required at the content level

- Content reuse at scale is a strategic priority, where structured models have demonstrated ROI of 300% or higher

- AI agents will interact with the content, where structured, schema-defined data is natively compatible with emerging integration standards like MCP

The questions to ask internally:

- How many channels does our content serve? If the answer is more than one, the reuse case for structured content is immediate.

- What types of queries will our AI systems need to answer? If they involve connecting information across documents or understanding relationships between entities, structured knowledge significantly outperforms unstructured.

- What are our governance requirements? If content must be auditable, version-controlled, or compliant with regulatory standards, those requirements live at the content model layer, not the AI layer.

- How long does our content live? If it is created once and discarded, structuring it may add overhead without proportional return. If it is maintained, reused, translated, or repurposed, structure pays for itself.

- Are we planning to integrate AI agents into our content workflows? If so, the investment in structured content is also an investment in AI infrastructure.

There is no single right answer. An organization might reasonably use unstructured workflows for campaign content and structured models for product information, regulatory content, and knowledge bases. The two approaches are not in competition. They serve different operational realities.

What has changed is that the decision now has a new input. LLMs have expanded the range of tasks that can be performed on unstructured content, and that is worth factoring into content architecture decisions. What has not changed is that the core value of structured content, reusability, consistency, governance, and multi-channel delivery, exists independently of AI capabilities. The evidence does not support abandoning structured content because LLMs are capable. It supports being more deliberate about where each approach delivers the most value.

“The Untapped Power of Unstructured Data in Enterprise AI.” Forbes. https://www.forbes.com/councils/forbestechcouncil/2025/11/24/the-untapped-power-of-unstructured-data-in-enterprise-ai/ ↩︎

Multiple authors. “An Empirical Comparison of Text Summarization: A Multi-Dimensional Evaluation of Large Language Models.” arxiv.org. https://arxiv.org/abs/2504.04534 ↩︎

“From Chaos to Clarity: How LLMs Transform Unstructured Data.” storytell.ai. https://web.storytell.ai/blog/from-chaos-to-clarity-how-llms-transform-unstructured-data ↩︎ ↩︎

Aman Mishra. “Why LLMs Struggle with Structured Data Extraction from Unstructured Documents.” medium.com. https://medium.com/@aman005mishra/why-llms-struggle-with-structured-data-extraction-from-unstructured-documents-7e2af6be60b0 ↩︎ ↩︎ ↩︎

Multiple authors. “Order from Chaos: Comparative Study of Ten Leading LLMs on Unstructured Data Categorization.” arxiv.org. https://arxiv.org/html/2510.13885v1 ↩︎

Suprmind. “AI Hallucination Statistics: Research Report 2026.” suprmind.ai. https://suprmind.ai/hub/insights/ai-hallucination-statistics-research-report-2026/ ↩︎ ↩︎ ↩︎ ↩︎ ↩︎

“LLMStructBench: Benchmarking Large Language Model Structured Data Extraction.” arxiv.org. https://arxiv.org/html/2602.14743v1 ↩︎

Muhammad Mudassir. “RAG vs GraphRAG: When to Use Each (With Benchmarks).” cognilium.ai. https://cognilium.ai/blogs/rag-vs-graphrag ↩︎ ↩︎ ↩︎ ↩︎

“StructRAG: Boosting Knowledge Intensive Reasoning of LLMs via Inference-time Hybrid Information Structurization.” arxiv.org. https://arxiv.org/html/2410.08815v2 ↩︎

“Measuring ROI of Structured Content: Translation & Reuse.” net-effect.com. https://net-effect.com/measuring-roi-of-structured-content/ ↩︎

Anthropic. “Introducing the Model Context Protocol.” anthropic.com. https://www.anthropic.com/news/model-context-protocol ↩︎ ↩︎

Forrester. “Predictions 2026: AI Agents And New Business Models Impact Enterprise Software.” forrester.com. https://www.forrester.com/blogs/predictions-2026-ai-agents-changing-business-models-and-workplace-culture-impact-enterprise-software/ ↩︎ ↩︎

About the Author

Marcelo Lewin, Founder @ iBuildWith.ai

Marcelo is the founder of iBuildWith.ai and a Principal Content Architect and Builder at Cigna. He has over 30 years of experience in the tech industry. Having lived through the computer, internet, and mobile revolutions, he is now excited and focused on navigating the AI revolution by helping non-developers build real applications using AI. He founded several startups and held roles at various companies including Toyota, NBC, J.F. Shea, and Walt Disney Imagineering.